Why every organization should make it easy to report security flaws

Last year, I had dinner with a friend who works in the cybersecurity field. They recounted a recent conversation they had with a corporate executive where I came up by name, and the executive apparently visibly shuddered, as if in revulsion. The executive told my friend that one of their fears is someday getting an email from me.

The implication, my friend told me, was that the executive had "f—ed up somehow if he's reaching out."

As a cybersecurity journalist, it's not uncommon for folks to contact me after discovering a security bug or an active data leak. At TechCrunch, where I oversee our cybersecurity coverage, I have a triage-like system that allows us to attempt to resolve flaws when there is a good chance that we will end up covering them as a story. To meet that bar for coverage, there has to be a public interest in the issue, such as when it involves a significant or well-known product or service; the bug has to be so simple or severe that it could be exploited with ease or at scale; or the issue has to affect a lot of people or be critical to national security.

Over the last year or so, I've noticed that the outreach has gone up, as well as our need to intervene in efforts to resolve glaring flaws or security lapses.

A common scenario is this: A customer, patient, or user of a website or service finds a security bug exposing their private data, or the data of someone else, but they have no way to alert the company or vendor to the issue. Oftentimes, the bug is simple to find or exploit, enough so that the person feels compelled in good faith to try to alert someone to the problem.

But customer support agents are not typically trained to handle security issues, and companies have retaliated in the past against people who find security bugs, which might understandably deter some from drawing attention to themselves.

That's why people who find these issues go to the media, usually when they feel they have no other option.

And so, the reporter contacts the company's public relations department, and the company realizes that word is starting to get out about the thing it tried to ignore. The company fixes the problem (or not) and issues a statement (...or not). The reporter publishes a story about the lapse and the company gets a reputational bruising.

On the other hand, some people have no means to contact a reporter, leaving the issue unresolved and potentially maliciously exploited down the line. The company eventually gets hacked or breached, and then even more media end up writing about it.

Not having a dedicated security email address on a company or organization's website makes it far more difficult for people to report security issues in their products, website, or infrastructure.

I hear this gripe consistently from security researchers and almost anyone else who has discovered a security bug. Most of the time, people just want to be able to alert the right person to the problem, maybe get a "thank you" in return, and be on their way.

Here are a few examples of when companies don't handle their scandal:

- Fashion retail giant Express publicly exposed the order details of every customer who made a purchase, including their personal information, home address, and what they bought. A customer found the bug but found no way to alert the company, so he flagged it to me for help. The bug was easily scriptable, so it was plausible that someone could have mass-scraped people's order details. Express fixed the bug, but wouldn't commit to notifying affected customers.

- Security researcher Eaton Zveare found cargo shipping tech firm Bluspark was exposing the admin passwords of its shipping systems to the web in plaintext, but had no way to contact the company. Zveare asked me to reach out. I finally heard back… from the company's lawyers. The issue was fixed in the end, but I dread to think how anyone else might have handled receiving an email from a lawyer just for trying to do the right thing.

- Big box retailer Home Depot left sensitive private keys to its internal systems publicly exposed for a year. But when security researcher Ben Zimmermann found them online and tried to alert the company, including its top cybersecurity official, Home Depot did not respond. The retailer eventually nuked the keys, but said nothing else of the incident.

- A couple of notable security bugs in a payment system used across North American public transit systems remain unfixed after the tech company behind the payment system ignored multiple emails from both me and a customer. I also asked city officials at one of the affected transit systems to reach out, and they said they hadn't heard back from the company either.

Though, it's not all doom and gloom. There are some rare wins:

- Laundry servicing giant CSC ServiceWorks implemented a vulnerability disclosure policy after two college students uncovered a security bug that allowed anyone to do their laundry for free, the on-campus equivalent of hitting the jackpot. The duo tried to alert the company but got nowhere — and neither did I — until after my story was published and the company realized it had made a mistake.

- And, recently: The maker of a dental practice management system, Practice by Numbers, used in thousands of dentists' offices around the U.S., vowed to update its website to allow reports of future security issues. This comes after one patient, Joseph R. Cox, found his private medical records were exposed to other users of the company's patient portal.

A plea from me: Please make it easier for hackers, security researchers, and frankly anyone else to contact your company or organization about security issues.

A contact form is not enough. A general email address is also not enough. Instead, consider a dedicated security email address on your website as a great place to start, as this actively signals to the outside world that you are open to feedback. (Tech company Ghost, which hosts the backend for this website, has a security page if you ever find a security bug on mine, for example.)

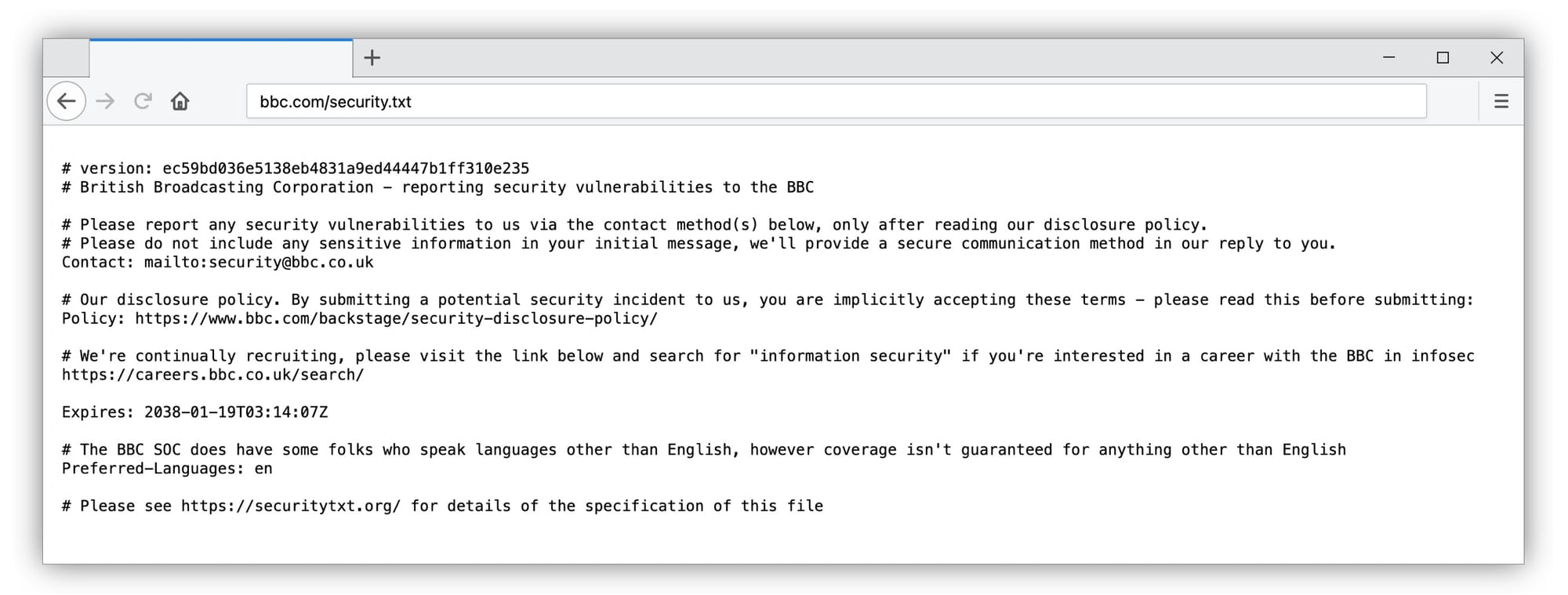

Another easy and effective way to allow people to find your dedicated security email address is to use security.txt, a simple concept that consists of putting a small text file in a known area on your website so that security researchers can know where to look and find the dedicated security email. The idea for security.txt originated from security researchers who wanted to make it easier to identify an email address of someone at the organization capable of handling bug reports.

Take a look at the BBC's version of security.txt, which is a great example of how the broadcaster guides people on where to report security flaws across the BBC's domain.
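If you've never seen one, here is a rough sketch of what a minimal security.txt might contain, written as a short Python script that generates the file. The field names (Contact, Expires, Policy) and the conventional location of /.well-known/security.txt come from RFC 9116, the published standard for security.txt. Everything else is an assumption for illustration: the function name, the example.com email address and policy URL are all made up, and the 180-day expiry is just one reasonable choice, not a recommendation from any particular company.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def build_security_txt(contact_email: str,
                       policy_url: Optional[str] = None,
                       valid_days: int = 180) -> str:
    """Return the text of a minimal security.txt file.

    Hypothetical helper for illustration only. RFC 9116 requires the
    Contact and Expires fields; Policy is optional.
    """
    # Expires tells researchers when the file should be considered stale.
    expires = datetime.now(timezone.utc) + timedelta(days=valid_days)
    lines = [
        f"Contact: mailto:{contact_email}",
        f"Expires: {expires.strftime('%Y-%m-%dT%H:%M:%SZ')}",
    ]
    if policy_url:
        # Optional link to a page describing how reports are handled.
        lines.append(f"Policy: {policy_url}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Placeholder values; swap in your organization's real details.
    print(build_security_txt("security@example.com",
                             "https://example.com/security"))
```

The output would then be saved as a plain text file and served from /.well-known/security.txt on your website. Because the Expires date eventually lapses, someone has to own the file and refresh it, which is a useful nudge to keep the contact address current.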

Your company's decision to take this approach ultimately rests with leadership. If you're in executive management or able to effect change at your company, please make the case for this. As I sometimes say, share this blog post with them if it's more time-efficient — as this issue goes to the core of how a company operates and publicly presents itself.

Yes, it's more work. And yes, you have to fix bugs that you might otherwise not be aware of. Having a dedicated security email address is a strong start for reactively fixing issues, and it can open the door for greater collaboration with the security research community down the line. This could include things like establishing a proper vulnerability disclosure program, where you publicly outline the scope in which you want people outside your organization to prod and poke at your front-facing systems to find bugs. And, having a bug bounty program in place can financially reward people for finding these flaws, which is another way to give back to the community.

As one person (whose social account is private) shared with me recently regarding this issue, simply having a dedicated channel for folks to report issues to a person capable of handling security requests "is a force multiplier in reputational and risk hygiene."

Here's another way to look at it.

If, as a corporate executive, your fear is that one day you're going to hear from me (or any other journalist for that matter), the irony is that you might never hear from me about whatever bug, flaw, or lapse you had — if you have a dedicated security email address on your website.

That's because if you make it easy for people to reach out to you, people will! It might not stop every hack or data breach, but at least you are giving people the opportunity to alert you first.

You might not prevent the news from ever getting out, but this can be a net-positive experience and doesn't have to be a PR disaster. You should be proud of taking on this challenge and that you care about your company and customers enough to protect their information.

Sharing in the now-safe aftermath of an incident can be helpful for anyone to learn from. It's not the security lapse or the vulnerability that's the issue; how it's handled matters most from a reputational point of view. Once the issue is fixed, actively sharing what you learned can be enormously helpful and a powerful thing — especially to help others identify similar issues.

As the saying goes, "it takes a village," and that's especially true in cybersecurity, where everyone is fighting the same battle.

Thank you so much for reading ~this week in security~. If you liked this article, please share it! Feel free to reach out with any feedback, questions, or comments about this article: this@weekinsecurity.com.